We Won a SEED Grant in 2022 with Georgia State University

Empirical Education began serving as a program evaluator of the teacher residency program, Collaboration and Reflection to Enhance Atlanta Teacher Effectiveness (CREATE), in 2015 under a subcontract with Atlanta Neighborhood Charter Schools (ANCS) as part of their Investing in Innovation (i3) Development grant. In 2018, we extended this work with CREATE and Georgia State University under the U.S. Department of Education's Supporting Effective Educator Development (SEED) Grant Program. In 2020, we were awarded additional SEED grants to further extend our work with CREATE.

Last month, in October 2022, we were notified that this important work will receive continued funding through SEED. CREATE has proposed the following goals for this continued funding:

- Goal 1: Recruit, support, and retain compassionate and skilled educators via residency

- Goal 2: Design and enact transformative learning opportunities for experienced educators, teacher educators, and local stakeholders

- Goal 3: Sustain effective and financially viable models for educator recruitment, support, and retention

- Goal 4: Ensure all research efforts are designed to benefit partner organizations

Empirical remains deeply committed to designing and executing a rigorous and independent evaluation that will inform partner organizations, local stakeholders, and a national audience of the potential impact and replicability of a multifaceted program that centers equity and wellness for educators and students. With this new grant, we are also committed to integrating more mixed-methods approaches to better align our evaluation with CREATE’s mission, and to contributing to recent conversations about what it means to conduct educational effectiveness work with an equity and social justice orientation.

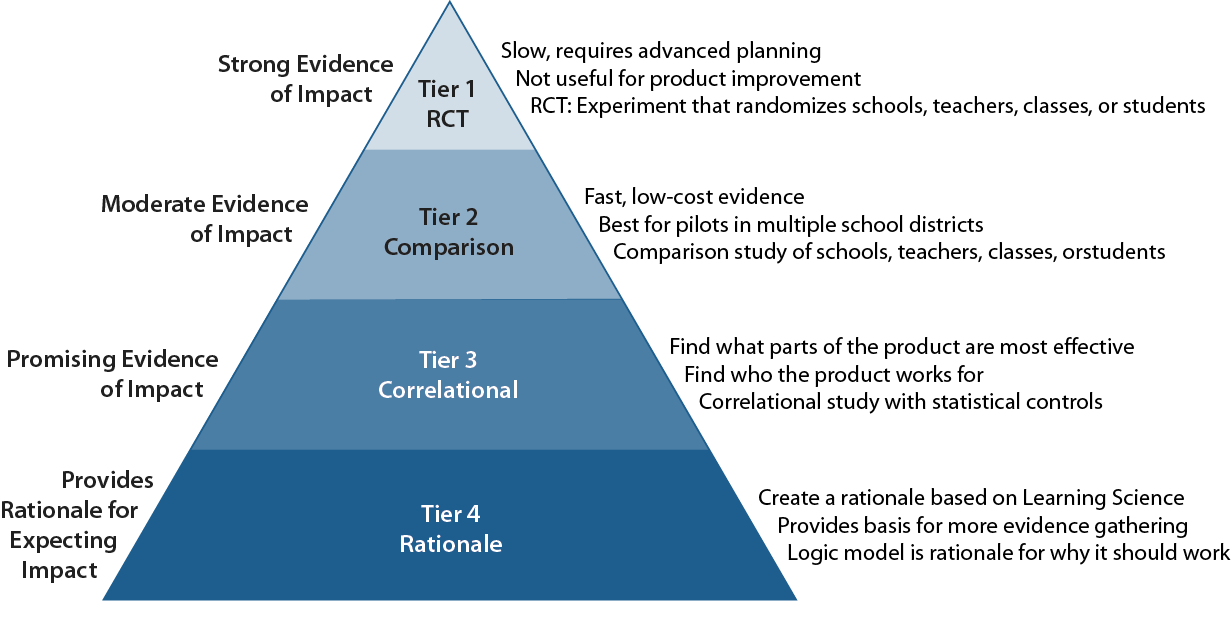

Using a quasi-experimental design and a mixed-methods process evaluation, we aim to understand the impact of CREATE on teachers’ equitable and effective classroom practices, student achievement, and teacher retention. We will also explore key mediating impacts, such as teacher well-being and self-compassion, and conduct cost-effectiveness and cost-benefit analyses. Importantly, we want to explore the costs and benefits of CREATE for local stakeholders, centering this work in the Atlanta community. This funding allows us to extend our evaluation through CREATE’s 10th cohort of residents and to continue exploring the impact of CREATE on Cooperating Teachers and experienced educators in Atlanta Public Schools.